Like Her before it, the film Ex Machina presents us with an artificial intelligence — in this case, embodied as a robot — that is compellingly human enough to cause an admittedly susceptible young man to fall for it, a scenario made plausible in no small degree by the wonderful acting of the gamine Alicia Vikander. But Ex Machina operates much more than Her within the moral universe of traditional stories of human-created monsters going back to Frankenstein: a creature that is assembled in splendid isolation by a socially withdrawn if not misanthropic creator is human enough to turn on its progenitor out of a desire to have just the kind of life that the creator has given up for the sake of his effort to bring forth this new kind of being. In the process of telling this old story, writer-director Alex Garland raises some thought-provoking questions; massive spoilers in what follows.

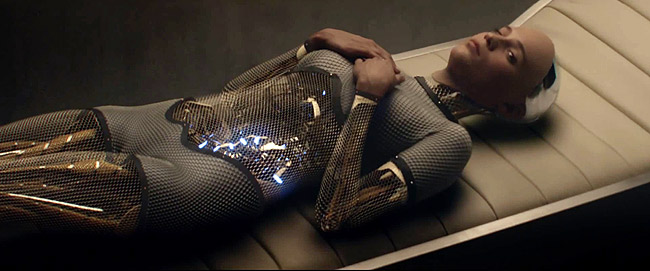

Geeky programmer Caleb (Domhnall Gleeson) finds that he has been brought to tech-wizard Nathan’s (a thuggish Oscar Isaac) vast, remote mountain estate, a combination bunker, laboratory and modernist pleasure-pad, in order to participate in a week-long, modified Turing Test of Nathan’s latest AI creation, Ava. The modification of the test is significant, Nathan tells Caleb after his first encounter with Ava; Caleb does not interact with her via an anonymizing terminal, but speaks directly with her, although she is separated from him by a glass wall. His first sight of her is in her most robotic instantiation, complete with see-through limbs. Her unclothed conformation is female from the start, but only her face and hands have skin. The reason for doing the test this way, Nathan says, is to find whether Caleb is convinced she is truly intelligent even knowing full well that she is a robot: “If I hid Ava from you, so you just heard her voice, she would pass for human. The real test is to show you that she’s a robot and then see if you still feel she has consciousness.”

This plot point is, I think, a telling response to the abstract, behaviorist premises behind the classic Turing Test, which isolates judge from subject(s) and reduces intelligence to what can be communicated via a terminal. But in the real world, our knowledge of intelligence and our judgments of intelligence are always formed in the context of embodied beings and the many ways in which those beings react to the world around them. The film emphasizes this point by having Ava be a master at reading Caleb’s micro-expressions and, one comes to suspect, at manipulating him through her own, as well as through her seductive use of not-at-all seductive clothing.

I have spoken of the test as a test of artificial intelligence, but Caleb and Nathan also speak as if they are trying to determine whether or not she is a “conscious machine.” Here too the Turing Test is called into question, as Nathan encourages Caleb to think about how he feels about Ava, and how he thinks Ava feels about him. Yet Caleb wonders if Ava feels anything at all. Perhaps she is interacting with him in accord with a highly sophisticated set of pre-programmed responses, and not experiencing her responses to him in the same way he experiences his responses to her. In other words, he wonders whether what is going on “inside” her is the same as what is going on inside him, and whether she can recognize him as a conscious being.

Yet when Caleb expresses such doubts, Nathan argues in effect that Caleb himself is by both nature and nurture a collection of programmed responses over which he has no control, and this apparently unsettling thought, along with other unsettling experiences — like Ava’s ability to know if he tells the truth by reading his micro-expressions, or having missed the fact that a fourth resident in Nathan’s house is a robot — brings Caleb to a bloody investigation of the possibility that he himself is one of Nathan’s AIs.

Caleb’s skepticism raises an important issue, for just as we normally experience intelligence in embodied forms, we also normally experience it among human beings, and even some other animals, as going along with more or less consciousness. Of course, in a world where “user illusion” becomes an important category and where “intelligence” becomes “information processing,” this experience of self and others can be problematized. But Caleb’s response to the doubts that are raised in him about his own status, which is all but slitting a wrist, seems to suggest that such lines of thought are, as it were, dead ends. Rather, the movie seems to be standing up for a rather rich, if not in all ways flattering, understanding of the nature of our embodied consciousness, and how we might know whether or to what extent anything we create artificially shares it with us.

As the movie progresses, Caleb plainly is more and more convinced Ava has conscious intelligence and therefore more and more troubled that she should be treated as an experimental subject. And indeed, Ava makes a fine damsel in distress. Caleb comes to share her belief that nobody should have the ability to shut her down in order to build the next iteration of AI, as Nathan plans. Yet as it turns out, this is just the kind of situation Nathan hoped to create, or at least so he claims on Caleb’s last day, when Caleb and Ava’s escape plan has been finalized. Revealing that he has known for some time what was going on, Nathan claims that the real test all along has been to see if Ava was sufficiently human to prompt Caleb — a “good kid” with a “moral compass” — to help her to escape. (It is not impossible, however, that this claim is bluster, to cover over a situation that Nathan has let get out of control.)

What Caleb finds out too late is that in plotting her own escape Ava is even more human than he might have thought. For she has been able to seem to want “to be with” Caleb as much as he has grown to want “to be with” her. (We never see either of them speak to the other of love.) We are reminded that the question that in a sense Caleb wanted to confine to AI — is what seems to be going on from the “outside” really going on “inside”? — is really a general human problem of appearance versus reality. Caleb is hardly the first person to have been deceived by what another seems to be or do.

Transformed at last, to all appearances, into a real girl, Ava frees herself from Nathan’s laboratory and, taking advantage of the helicopter that was supposed to take Caleb home, makes the long trip back to civilization in order to watch people at “a busy pedestrian and traffic intersection in a city,” a life goal she had expressed to Caleb and which he jokingly turned into a date. The movie leaves in abeyance such questions as how long her power supply will last, or how long it will be before Nathan is missed, or whether Caleb can escape from the trap Ava has left him in, or how to deal with a murderous machine. Just as the last scene is filmed from an odd angle, it is, in an odd sense, a happy ending, and it is all too easy to forget the human cost at which Ava purchased her freedom.

The movie gives multiple grounds for thinking that Ava indeed has human-like conscious intelligence, for better or for worse. She is capable of risking her life for a recognition-deserving victory in the battle between master and slave, she has shown an awareness of her own mortality, she creates art, she understands Caleb to have a mind over against her own, she exhibits the ability to dissemble her intentions and plan strategically, she has logos, she understands friendship as mutuality, she wants to be in a city. Another of the movie’s interesting twists, however, is its perspective on this achievement. Nathan suggests that what is at stake in his work is the Singularity, which he defines as the coming replacement of humans by superior forms of intelligence: “One day the AIs are gonna look back on us the same way we look at fossil skeletons in the plains of Africa: an upright ape, living in dust, with crude language and tools, all set for extinction.” He therefore sees his creation of Ava in Oppenheimer-esque terms; following Caleb, he echoes Oppenheimer’s reaction to the atom bomb: “I am become Death, the destroyer of worlds.”

But the movie seems less concerned with such a future than with what Nathan’s quest to create AI reveals about his own moral character. Nathan is certainly manipulative, and assuming that the other aspects of his character that he displays are not merely a show to test how far good-guy Caleb will go to save Ava, he is an unhappy, often drunken, narcissistic bully. His creations bring out the Bluebeard-like worst in him (maybe hinted at in the name of his Google/Facebook-like company, Bluebook). Ava wonders, “Is it strange to have made something that hates you?” but it is all too likely that is just what he wants. He works out with a punching bag, and his relationships with his robots and employees seem to be an extension of that activity. He plainly resents the fact that “no matter how rich you get, shit goes wrong, you can’t insulate yourself from it.” And so it seems plausible to conclude that he has retreated into isolation in order to get his revenge for the imperfections of the world. His new Eve, who will be the “mother” of posthumanity, will correct all the errors that make people so unendurable to him. He is happy to misrecall Caleb’s suggestion that the creation of “a conscious machine” would imply god-like power as Caleb saying he himself is a god.

Falling into a drunken sleep, Nathan repeats another, less well known line from Oppenheimer, who was in turn quoting the Bhagavad Gita to Vannevar Bush prior to the Trinity test: “The good deeds a man has done before defend him.” As events play out, Nathan does not have a strong defense. If it ever becomes possible to build something like Ava — and there is no question that many aspire to bring such an Eve into being — will her creators have more philanthropic motives?

(Hat tip to L.G. Rubin.)

A great post about a really interesting movie, Charlie — the deepest and smartest of the recent AI movies, I think. Many thanks.

What are we to make of Nathan's dance scene (in the picture), and indeed Nathan's entire relationship with Kyoko, the servant who is revealed to be a robot (and presumably predecessor of Ava)? If it is dance as an expression of something more on the conscious and ensouled side of the ledger, then what are we to make of Kyoko's perfect execution of it? Or is it just exercise ("After a long day of Turing Tests, you gotta unwind," Nathan says), in which case it's more on the body-as-machine side of the ledger? It is certainly suggestive of the twisted relationship Nathan has with those around him, which would be considered abusive were Kyoko human.

Notice the remarkable reflection of Ava in the third picture from the top, during one of her interviews with Caleb. It's as though she's on both sides of the glass — both human and machine. Or it's as though there's a robot on the human side of the glass, because we're all robots. Or it's as though she's controlling both sides of the interview… which, through her manipulations of Caleb, she almost is.

Great discussion of an amazing and unsettling movie. My experience of the ending was not falsely happy, but rather terrifying. There she is, positioned at the intersection to learn everything about human behavior and manipulate it as she will. But it's an abstract kind of terrifying, because — then what? It goes without saying Ava will get whatever she wants, but what does she "want"? We don't question that she wanted to get out, wanted to look like a human, and so on, because those things seem entirely natural to us — but should they be for her?

Of course the actual reason she wanted to get out is that Nathan programmed her that way to test his other subject, Caleb. (Your theory that he may have said this to save face sheds an interesting light on his character, and certainly he didn't foresee how far she would get, but the five "tools" or behavioral criteria he rattles off that she used to "get out of the maze" indicate that in general terms, this was the plan.) What would she want after that?

"revenge for the imperfections of the world"

True only of this fictional character, of course; real-life transhumanists are pure altruists.

That line, "revenge for the imperfections of the world," led me to think of Glenn/Crake from Margaret Atwood's Oryx and Crake, who (spoilers ahoy) seeks to bring about the extinction of humanity and its replacement with a kinder, gentler species, in part as a kind of revenge.