[Continuing coverage of the UN’s 2015 conference on killer robots. See all posts in this series here.]

I mentioned in my first post in this series that last year’s meeting on Lethal Autonomous Weapons Systems was extraordinary by the standards of the UN body conducting it, in that delegations actually showed up, made statements, and paid attention. What was lacking, though, were high-quality, on-topic expert presentations (other than those of my colleagues in the Campaign to Stop Killer Robots, of course). If Monday’s session on “technical issues” is any indication, that sad story will not be repeated this year.

“Aggressive Maneuvers for Autonomous Quadrotor Flight”

Berkeley computer science professor Stuart Russell, coauthor (with Peter Norvig of Google) of the leading textbook on artificial intelligence, scared the assembled diplomats out of their tailored pants with his account of where we are in the development of technology that could enable the creation of autonomous weapons. (You can see Professor Russell’s slides here.) Thanks to “deep learning” algorithms, the new wave of what used to be called artificial neural networks, “We have achieved human-level performance in face and object recognition with a thousand categories, and super-human performance in aircraft flight control.” Of course, human beings can recognize far more than a thousand categories of objects plus faces, but the kicker is that with thousand-frame-per-second cameras, computers can do this with cycle times “in the millisecond range.”

|

| “embarrassingly slow, inaccurate, and ineffective” |

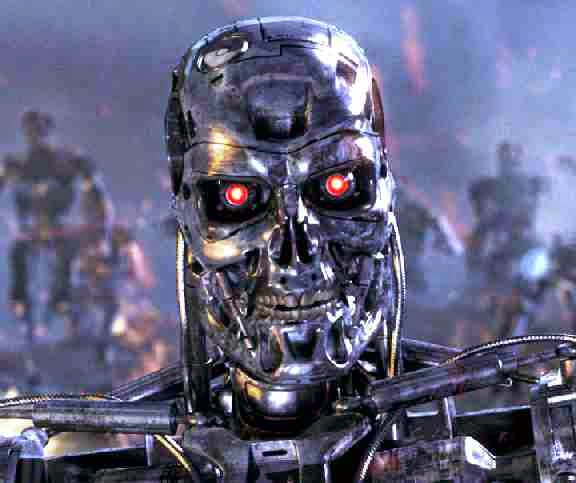

After showing a brief clip of Vijay Kumar’s dancing quadrotor micro-drones engaged in cooperative construction activities entirely scheduled by autonomous AI algorithms, Russell discussed what this implied for assassination robots. He lamented that a certain gleaming metallic avatar of Death (pictured at right) had become the iconic representation of killer robots, not only because this is bad PR for the artificial intelligence profession, but because such a bulky contraption would be “embarrassingly slow, inaccurate, and ineffective compared to what we can build in the near future.” For effect, he added that since small flying drones cannot carry much firepower, they would target vulnerable parts of the body such as the eyeballs; but if needed, a gram of shaped-charge explosive could easily pierce the skull like a bazooka busting a tank.

Professor Russell then criticized the entire discussion of this issue for focusing only on near-term developments in autonomous weaponry and asking whether they would be acceptable. Rather, “we should ask what is the end point of the arms race, and is that desirable for the human race?” In other words, “Given long-term concerns about the controllability of artificial intelligence,” should we begin by arming it? He assured the audience that it would be physics, not AI technology, that would limit what autonomous weapons could do. He called on his own colleagues to rehabilitate their public image by repudiating the push to develop killer robots, and noted that major professional organizations had already begun to do this.

Of course, every panel must be balanced, and the counterweight to Russell’s presentation was that of Paul Scharre, one of the architects of current U.S. policy on autonomous weapon systems (AWS), who has emerged as perhaps their most effective advocate. Now with the Center for a New American Security, Scharre worked for five years as a civilian appointee in the Pentagon. In his presentation, he embraced the conversation about the “risks and downsides” of AWS, as well as discussion about the need for human involvement to ensure correct decisions, both to provide a “human fuse” in case things go haywire and to act as a “moral agent.” However, it seems to me that Scharre engages these concerns with the aim of disarming those who raise them, while blunting efforts to draw hard conclusions that would point to the need for legally binding arms control. (Over the past few months I have had a few exchanges with Scharre that you can read about in this post on my own blog, as well as in my new article in the Bulletin of the Atomic Scientists on “Semi-Autonomous Weapons in Plato’s Cave.”)

In a recent roundtable discussion hosted by Scharre at the Center for a New American Security, I emphasized the danger posed by interacting systems of armed autonomous agents fielded by different nations. To illustrate the threat, I drew an analogy to the interactions of automated financial agents trading at speeds beyond human control. On May 6, 2010, such trading systems contributed to a “flash crash” on U.S. stock exchanges during which the Dow Jones Industrial Average rapidly lost almost a tenth of its value. The stock market, however, recovered most of that loss within minutes. No such recovery could be expected if major (nuclear) powers were drawn into a “flash war” by autonomous weapons systems.

Although some critics (including yours truly) have been talking about this aspect of the issue for years, Scharre has recently gotten out ahead of most of his own community of hawkish liberals in emphasizing it, apparently with genuine concern. He acknowledges, for example, that because nations will keep their algorithms secret, they will not know what opposing systems are programmed to do.

However, Scharre proposes multilateral negotiations on “rules of the road” and “firebreaks” for armed autonomous systems as the way to address this problem, rather than avoiding the creation of such a problem in the first place. In an intervention yesterday on behalf of the International Committee for Robot Arms Control (ICRAC), I asked whether such talks, if begun, should not be seen as an effort to legalize killer robots as much as to make them safe.

Of course, to a certain kind of political realist, this may seem the only possible solution. I will admit that if nation-states did field automated networks of sensors and weapons in confrontation with one another, I would want those nation-states to be talking and trying to minimize the likelihood of unintended ignition or escalation of violence, even if I doubt such an effort could succeed before it was too late. But why, I ask again, would we not prefer, if possible, to banish this specter of out-of-control war machines from our vision of the future?

The author, delivering the ICRAC opening statement.

I missed most of the opening country statements because I was busy helping to prepare, and then deliver, ICRAC’s opening statement. Here’s a snippet of what I read:

ICRAC urges the international community to seriously consider the prohibition of autonomous weapons systems in light of the pressing dangers they pose to global peace and security…. We fear that once they are developed, they will proliferate rapidly, and if deployed they may interact unpredictably and contribute to regional and global destabilization and arms races.

ICRAC urges nations to be guided by the principles of humanity in its deliberations and take into account considerations of human security, human rights, human dignity, humanitarian law and the public conscience…. Human judgment and meaningful human control over the use of violence must be made an explicit requirement in international policymaking on autonomous weapons.

From what I did get to hear of the countries’ opening statements, they showed a substantial deepening of understanding since last year. Japan’s representative stated that Japan would not create autonomous weapons, and France and Germany remained in the peace camp, although I am told the German position has weakened slightly. (The German statement doesn’t seem to be online yet.) The strongest statement from any NATO member state was that of Croatia, which unequivocally called for a legal ban on autonomous weapons. But perhaps most significant of all was the Chinese statement (also not yet online), which called autonomous weapons a threat to humanity and noted the warnings of Russell and Stephen Hawking about the dangers of out-of-control “superintelligent” AI.

If the Chinese are interested in talking seriously about banning killer robots, shouldn’t the United States be as well? I see a glimmer of hope in the U.S. opening statement, which referred to the 2012 directive on autonomous weapons as merely providing a starting point that would not necessarily set a policy for the future. The Obama administration has a bit less than two years left to come up with a better one.